Hernán Pérez Rodal · Engineering · 6 min read

Agentic Compliance System with LangGraph: patterns that work in production

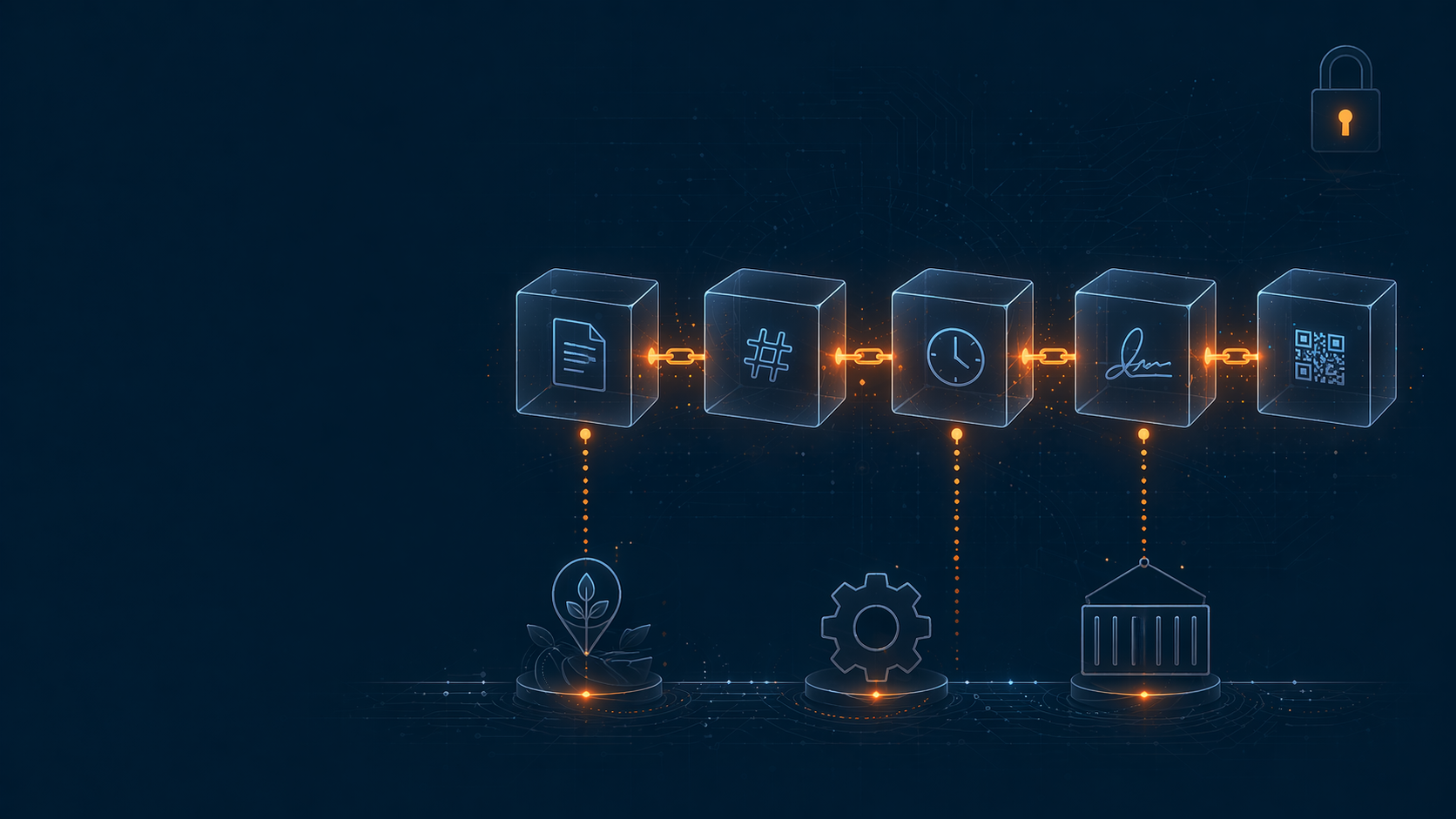

Not every multi-agent pattern survives regulated domains. We share the agent architecture we use at Darwin, why, and which anti-patterns we avoid.

TL;DR — Agent tutorials talk about “autonomous AI” as if it were a magical superpower. In regulated production, autonomy without guardrails is a legal nightmare. At Darwin we run a multi-agent system with LangGraph, and the value isn’t in autonomy — it’s in the deterministic orchestration of LLM-powered components.

The problem

Darwin processes thousands of compliance cases per day over food traceability data. For every case, the system has to:

- Interpret a regulatory question (free text from user or system)

- Decompose it into sub-queries (regulatory, operational, evidence)

- Delegate each sub-query to the right agent

- Synthesize the results into an answer with gap analysis + risk scoring

- Validate before presenting — especially numeric data

Trying to make a single LLM do everything end-to-end with one giant prompt fails in production. Models get confused, hallucinate data, lose intermediate context. When the output goes to an FDA audit, “it was a bit off” isn’t acceptable.
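As a rough illustration of the decomposition and delegation steps listed above, a dispatch table keeps the "right agent" decision in plain code. This is a sketch under assumptions, not Darwin's actual code: the SubQuery type, the handler functions, and the HANDLERS table are hypothetical names, and the handlers are stubs where real ones would call an LLM or a database.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SubQuery:
    kind: str  # "regulatory", "operational", or "evidence"
    text: str


# Hypothetical worker stubs; real ones would call an LLM or a database.
def regulatory_agent(q: SubQuery) -> str:
    return f"[regulatory] {q.text}"


def operational_agent(q: SubQuery) -> str:
    return f"[operational] {q.text}"


def evidence_agent(q: SubQuery) -> str:
    return f"[evidence] {q.text}"


HANDLERS: dict[str, Callable[[SubQuery], str]] = {
    "regulatory": regulatory_agent,
    "operational": operational_agent,
    "evidence": evidence_agent,
}


def delegate(sub_queries: list[SubQuery]) -> list[str]:
    """Route each sub-query by its kind; unknown kinds fail loudly."""
    results = []
    for q in sub_queries:
        handler = HANDLERS.get(q.kind)
        if handler is None:
            raise ValueError(f"no agent registered for kind {q.kind!r}")
        results.append(handler(q))
    return results
```

An unknown kind raises instead of falling through to a default agent, so a misclassified sub-query shows up as an error rather than as a plausible-looking answer.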

The pattern we use: supervisor + specialized workers

The architecture is:

```
            [Supervisor]
                 │
    ┌────────────┼─────────────┬────────────┐
    │            │             │            │
[Research]  [Validation]  [Analytics]  [Reporting]
```

- Supervisor — decides which agents to invoke, in what order, and when to stop

- Research Agent — fetches regulatory context + traceability events (uses the hybrid RAG I described here)

- Validation Agent — cross-checks Research findings against deterministic business rules

- Analytics Agent — runs structured queries + anomaly detection on events

- Reporting Agent — synthesizes everything into a consumable output (PDF report, JSON API, UI view)

The supervisor is the critical piece. It’s the one that decides, not the workers.

Why LangGraph and nothing else

We evaluated several options before picking LangGraph:

| Framework | Why / why not |
|---|---|
| LangChain Agents (AgentExecutor) | Too magical. Hard to debug in production. |
| CrewAI | Good for prototypes. Abstraction too high for specific patterns. |
| AutoGen | Strong at multi-agent conversations. Less ideal for deterministic flows. |
| Custom state machine | Considered. For our case, LangGraph delivers 80% with 20% of the code. |
| LangGraph ✅ | Explicit state machine + checkpoints + streaming. Debuggable, production-grade. |

The key: LangGraph is not “an agent framework”. It’s a state machine with superpowers for LLM calls. That mental shift changes how you use it.

Patterns that work

1. Deterministic supervisor, not autonomous

Our supervisor does NOT ask the LLM “what do I do now?” at every step. That’s magical autonomy and it’s an anti-pattern.

What it does do:

```python
def supervisor_node(state: ComplianceState) -> str:
    """Decide the next node from state: deterministic rules first, LLM second."""
    if not state.query_classified:
        return "classify_query"
    if state.classification == "regulatory" and not state.regulatory_context:
        return "research"
    if state.has_numerical_claims and not state.validated:
        return "validate"
    if state.ready_for_report:
        return "report"
    # "END" is a routing label, assumed to be mapped to LangGraph's END
    # in the conditional-edge mapping.
    return "END"
```

The LLM is only called inside individual nodes (classify, research, validate). Routing is plain code, which prevents an LLM from “deciding” to skip a critical validation step.
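One practical consequence of routing in code is that it can be unit-tested without an LLM or LangGraph in the loop. The sketch below is a plain-Python stand-in, not our production graph: the ComplianceState dataclass, its defaults, the reported flag, and the stub nodes are assumptions made so the example runs on its own. The route function mirrors supervisor_node above.

```python
from dataclasses import dataclass


@dataclass
class ComplianceState:
    # Field names follow supervisor_node; defaults and `reported` are assumptions.
    query_classified: bool = False
    classification: str = ""
    regulatory_context: str = ""
    has_numerical_claims: bool = False
    validated: bool = False
    ready_for_report: bool = False
    reported: bool = False


def route(state: ComplianceState) -> str:
    if not state.query_classified:
        return "classify_query"
    if state.classification == "regulatory" and not state.regulatory_context:
        return "research"
    if state.has_numerical_claims and not state.validated:
        return "validate"
    if state.ready_for_report and not state.reported:
        return "report"
    return "END"


# Stub nodes: each one only mutates state, it never picks the next node.
def classify_query(s: ComplianceState) -> None:
    s.query_classified = True
    s.classification = "regulatory"
    s.has_numerical_claims = True


def research(s: ComplianceState) -> None:
    s.regulatory_context = "context for the cited rule"


def validate(s: ComplianceState) -> None:
    s.validated = True
    s.ready_for_report = True


def report(s: ComplianceState) -> None:
    s.reported = True


NODES = {
    "classify_query": classify_query,
    "research": research,
    "validate": validate,
    "report": report,
}


def run_case(state: ComplianceState, max_steps: int = 20) -> list[str]:
    """Drive the stub graph and return the order in which nodes ran."""
    visited = []
    for _ in range(max_steps):
        nxt = route(state)
        if nxt == "END":
            return visited
        NODES[nxt](state)
        visited.append(nxt)
    raise RuntimeError("step budget exhausted")
```

With these stubs, a case that carries numeric claims cannot reach the report node without passing through validate, and that property can be asserted in a test.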

2. Aggressive checkpointing

Every step emits a checkpoint. If something fails, we don’t re-run everything; we resume from the last good state. This is critical when a case touches 5-10 nodes and every LLM call costs time and money.

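```python
from langgraph.checkpoint.postgres import PostgresSaver

# from_conn_string() yields a saver bound to an open connection,
# so the graph has to be used inside this block.
with PostgresSaver.from_conn_string(DATABASE_URL) as checkpointer:
    checkpointer.setup()  # creates the checkpoint tables on first run
    graph = builder.compile(
        checkpointer=checkpointer,
        interrupt_before=["report"],  # Pause before final report
    )
```

3. Configurable human-in-the-loop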

Some cases are auto-approved (routine); others require review (high-stakes). We use LangGraph’s interrupt_before to pause before the nodes that we have decided require human intervention.

The operator reviews, approves or edits, and the graph resumes from the checkpoint. No lost work.
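Checkpointing and human-in-the-loop combine into a pause-and-resume loop. The sketch below illustrates that idea with the standard library only: an in-memory SQLite table stands in for the Postgres checkpointer, and the CheckpointStore class, the PIPELINE list, and the run function are hypothetical names. It shows the concept, not how LangGraph implements checkpoints internally.

```python
import json
import sqlite3


class CheckpointStore:
    """Keeps the latest state per case; a stand-in for a real checkpointer."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            "case_id TEXT PRIMARY KEY, next_node TEXT, state TEXT)"
        )

    def save(self, case_id: str, next_node: str, state: dict) -> None:
        self.conn.execute(
            "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
            (case_id, next_node, json.dumps(state)),
        )
        self.conn.commit()

    def load(self, case_id: str):
        row = self.conn.execute(
            "SELECT next_node, state FROM checkpoints WHERE case_id = ?",
            (case_id,),
        ).fetchone()
        return (row[0], json.loads(row[1])) if row else None


PIPELINE = ["research", "validate", "report"]
INTERRUPT_BEFORE = {"report"}  # nodes that need operator approval


def run(case_id: str, store: CheckpointStore, approved: bool = False):
    """Run or resume a case; returns ("paused" | "done", state)."""
    saved = store.load(case_id)
    next_node, state = saved if saved else (PIPELINE[0], {"done": []})
    while next_node != "END":
        if next_node in INTERRUPT_BEFORE and not approved:
            store.save(case_id, next_node, state)
            return "paused", state
        state["done"].append(next_node)  # stub for the real node work
        i = PIPELINE.index(next_node) + 1
        next_node = PIPELINE[i] if i < len(PIPELINE) else "END"
        store.save(case_id, next_node, state)  # checkpoint after every step
    return "done", state
```

Calling run a second time with approved=True picks up at the report node; research and validate are not executed again.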

4. Tool use with structured validation

When an agent calls a tool (query a DB, fetch a document, compute a metric), every output is validated against a Pydantic schema before going back to the LLM. If the tool returns something unexpected, the error is handled explicitly; we don’t pass it to an LLM that would make things up to paper over it.
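The "retry or escalate" path can be made explicit in a few lines. To stay dependency-free, this sketch uses hand-written checks in place of a Pydantic schema; the check_query_output and call_with_validation functions, the ToolOutputError exception, and the retry count are hypothetical.

```python
from typing import Any, Callable


class ToolOutputError(Exception):
    """Raised when a tool keeps returning output that fails validation."""


def check_query_output(raw: Any) -> dict:
    """Hand-rolled checks standing in for a Pydantic schema."""
    if not isinstance(raw, dict):
        raise ValueError("tool output must be an object")
    events = raw.get("events")
    total = raw.get("total_count")
    if not isinstance(events, list):
        raise ValueError("events must be a list")
    if not isinstance(total, int) or isinstance(total, bool) or total < 0:
        raise ValueError("total_count must be a non-negative integer")
    return {"events": events, "total_count": total}


def call_with_validation(tool: Callable[[], Any], max_attempts: int = 2) -> dict:
    """Return validated output, or raise so the case is escalated."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return check_query_output(tool())
        except ValueError as exc:
            last_error = exc  # retry; the raw output never reaches the LLM
    raise ToolOutputError(f"escalate: {last_error}")
```

The design choice is that invalid output has exactly two exits, a retry or an exception, and neither of them hands the malformed payload to a model.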

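```python
from pydantic import BaseModel


# CriticalTrackingEvent and DateRange are assumed to be models defined elsewhere.
class TraceabilityQueryOutput(BaseModel):
    events: list[CriticalTrackingEvent]
    total_count: int
    date_range: DateRange

# The tool response is validated against this schema. If validation fails,
# retry or escalate; never let the LLM improvise.
```

5. End-to-end observability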

Every node emits tracing with OpenTelemetry:

- Input state

- LLM prompt + response

- Token usage + cost

- Latency

- Tool calls (with inputs/outputs)

Without this, debugging an agent system is impossible. With it, you find why a case went wrong in minutes.
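As a dependency-free illustration of what gets recorded per node, the decorator below collects the same fields into an in-memory list. In a real setup these would be attributes on an OpenTelemetry span; the traced decorator, the SPANS list, and the usage key on the node result are assumptions for this sketch.

```python
import functools
import time

SPANS: list[dict] = []  # in-memory stand-in for a trace exporter


def traced(node_name: str):
    """Record input state, output, token usage, latency, and errors per call."""

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(state: dict) -> dict:
            span = {"node": node_name, "input": dict(state)}
            start = time.perf_counter()
            try:
                result = fn(state)
                span["output"] = result
                span["tokens"] = result.get("usage", {}).get("total_tokens", 0)
                return result
            except Exception as exc:
                span["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every call leaves a record.
                span["latency_s"] = time.perf_counter() - start
                SPANS.append(span)

        return wrapper

    return decorator


@traced("classify_query")
def classify_query(state: dict) -> dict:
    # Stub: a real node would call an LLM and report its actual usage.
    return {"classification": "regulatory", "usage": {"total_tokens": 42}}
```

Grouping these records by node name is enough to answer which node is slow or expensive for a given case.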

Anti-patterns we avoid

❌ Full supervisor autonomy. “The LLM decides when to stop” = runaway loops + surprise costs. Our supervisor has a maximum number of iterations per node and a timeout per case.

❌ Long agent-to-agent conversations. Tutorials show N agents “discussing”. In production, every exchange costs money and latency. We use a single message per agent, not a conversation.

❌ Unstructured shared global memory. Every agent writing to a shared dict → race conditions + hallucination about what the current state is. We use Pydantic-typed state with explicit mutations.

❌ LLM-as-everything. Many tutorials use an LLM for things a deterministic rule solves. General rule: if you can write it as code, write it as code. Use the LLM only for things that genuinely require natural-language reasoning.
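The iteration and timeout limits from the first anti-pattern fit in a small guard object that is charged before each node runs. This is a minimal sketch; the CaseBudget class, the BudgetExceeded exception, and the limits used are hypothetical.

```python
import time
from collections import Counter


class BudgetExceeded(RuntimeError):
    """Raised when a case hits its per-node visit limit or its deadline."""


class CaseBudget:
    def __init__(self, max_visits_per_node: int, timeout_s: float) -> None:
        self.max_visits = max_visits_per_node
        self.deadline = time.monotonic() + timeout_s
        self.visits: Counter = Counter()

    def charge(self, node: str) -> None:
        """Call before running a node; raises instead of looping forever."""
        if time.monotonic() > self.deadline:
            raise BudgetExceeded(f"case timed out before {node!r}")
        self.visits[node] += 1
        if self.visits[node] > self.max_visits:
            raise BudgetExceeded(f"{node!r} exceeded {self.max_visits} visits")
```

A supervisor loop would call budget.charge(next_node) right after routing, so a research/validate ping-pong ends with an exception that can be escalated instead of an open-ended bill.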

What didn’t work

V0: a single agent with tool use — we tried one Claude agent with access to all tools. Failed because the agent sometimes skipped critical validations when the prompt got long. We refactored into supervisor + workers.

Continuous UI streaming — we wanted to stream supervisor decisions to the UI in real time. Turned out confusing for users (they saw “research → validate → research → validate” with no idea why). We switched to showing only the main milestones and hiding internal orchestration.

What did work

Deterministic supervisor — zero runaway loops since we implemented it.

PostgreSQL checkpointing — we can resume cases that were left half-done by infra outages or API quotas.

Validation agent with rules + LLM — combining hardcoded rules + LLM-as-judge for edge cases gives better accuracy than either alone.

Per-agent metrics — we know exactly which agent is the latency or cost bottleneck. That lets us optimize selectively (e.g., swap the Reporting Agent to a smaller model without touching Research).
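The rules-plus-judge combination from the validation agent can be sketched as "rules decide when they can, the judge only sees what is left". Everything named here is an assumption for illustration: the Verdict enum, the quantity rule, the claim fields, and the judge callable that would wrap an LLM call.

```python
from enum import Enum
from typing import Callable


class Verdict(Enum):
    PASS = "pass"
    FAIL = "fail"
    UNSURE = "unsure"


def rule_check(claim: dict) -> Verdict:
    """Hypothetical hardcoded rule: shipped quantity cannot exceed received."""
    shipped = claim.get("shipped_kg")
    received = claim.get("received_kg")
    if shipped is None or received is None:
        return Verdict.UNSURE  # the rule cannot decide on missing data
    return Verdict.PASS if shipped <= received else Verdict.FAIL


def validate_claim(
    claim: dict, judge: Callable[[dict], Verdict]
) -> tuple[Verdict, str]:
    """Return the verdict plus which layer produced it."""
    verdict = rule_check(claim)
    if verdict is not Verdict.UNSURE:
        return verdict, "rule"
    return judge(claim), "llm_judge"
```

Returning the deciding layer alongside the verdict keeps the audit trail honest about whether a result came from a rule or from a model.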

Lessons learned

- Agentic ≠ autonomous — the best patterns are deterministic with selective LLM calls

- LangGraph is a state machine, not an agent framework — think of it that way and everything simplifies

- Aggressive checkpointing from day 0 — adding it later is expensive

- Validate tool outputs with schemas — never pass unverified data to the LLM

- Tracing + cost metrics per node — without them, no real iteration

What’s next?

We’re exploring reusable sub-graphs — our Validation Agent is generic enough to use in other flows (not just compliance). LangGraph supports graph composability, which opens the door to an internal catalog of patterns we can remix.

If you’re building AI agents in production and are surprised that your system is slower, more expensive, or less stable than your demo, the likely cause is a lack of deterministic orchestration. Start there.

Are you building something similar? Let’s talk — we’re interested in sharing architecture and patterns.