Hernán Pérez Rodal · Engineering · 3 min read

RAG over 10+ databases: what production taught us

Why vector-only RAG doesn't scale in compliance, how we designed hybrid retrieval across multiple stores, and the architectural decisions that worked in production.

TL;DR — In 2026 the consensus is clear: vector-only RAG doesn’t scale in production. At Darwin we built an agentic compliance system with hybrid retrieval across 10+ databases. The best decisions we made had nothing to do with the model — they were about the data layer.

The problem

Our agentic compliance system has to answer questions like:

“Which mango lots from producer X, processed between May and July, met the CTEs required by FSMA 204, and which ones have evidence gaps?”

A single question that combines:

- Regulatory knowledge (FSMA 204 CTE/KDE definitions — structured text)

- Traceability events (CTEs recorded with timestamp, geolocation, supplier, lot)

- Internal business rules (gap analysis, risk scoring — dynamic logic)

- Relationships between entities (producer → plant → shipment → retailer)

A single vector store with embeddings of everything mixed together can’t answer that well. The right answer requires joining structured data + semantic retrieval + aggregate computation.

The architecture

Our retrieval stack:

| Source | Type | Usage |

|---|---|---|

| Qdrant | Vector store | Regulations, doctrine, historical cases (unstructured data) |

| PostgreSQL | Relational | Traceability events (CTE/KDE with timestamps, IDs, geo) |

| Firebase/Firestore | Document | Per-customer config, UI state |

| Cloud Storage | Blob | Original PDFs, audit trails, digital evidence |

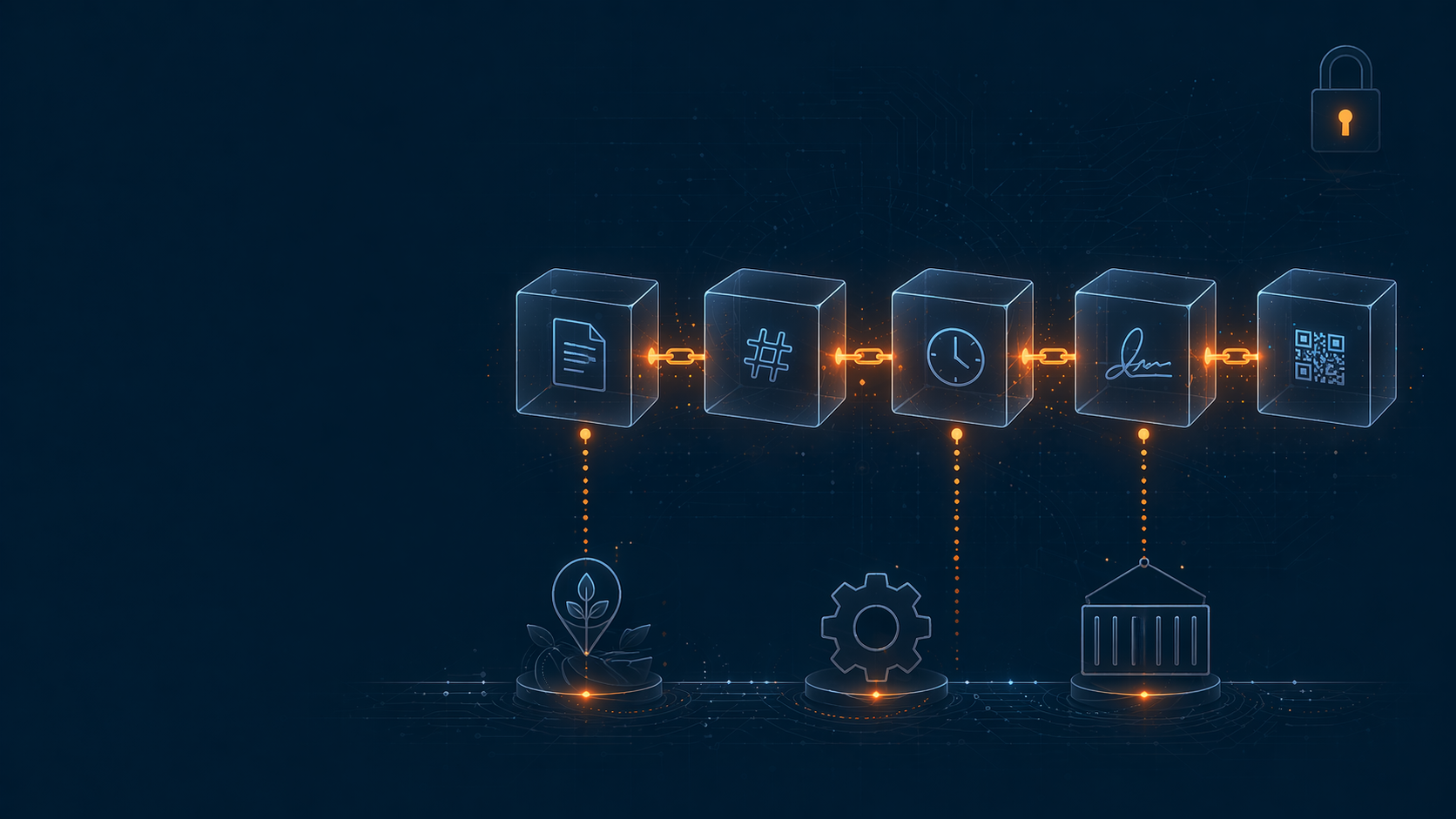

| On-chain (Polygon) | Immutable | Critical attestations, digital signatures |

The agent doesn’t know where each thing lives — the orchestrator resolves it.

LangGraph as orchestrator

We use LangGraph to route queries in multiple steps:

1. Classify: what kind of question it is (regulatory / operational / mixed)

2. Plan: which retrievals are needed (vector + SQL + graph traversal)

3. Fan-out: execute the planned retrievals in parallel

4. Synthesize: pass the results to the LLM as structured context

5. Validate: run guardrails to catch hallucinated numeric data

The key step was #2: giving the LLM a query planner that decides the retrieval strategy before going to fetch. Without that, the model hallucinates data or pulls in irrelevant context.
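The classify, plan, and fan-out steps can be sketched in plain Python. This is a minimal illustration and not our LangGraph graph: the keyword sets, the `Plan` dataclass, and the stub retrievers `vector_search` and `sql_query` are hypothetical names, and a production planner would more likely be an LLM call than keyword rules.

```python
import asyncio
from dataclasses import dataclass

# Hypothetical keyword rules, used only to keep the sketch self-contained.
REGULATORY_TERMS = {"fsma", "cte", "kde", "regulation", "required"}
OPERATIONAL_TERMS = {"lot", "lots", "producer", "shipment", "between"}


@dataclass(frozen=True)
class Plan:
    category: str                # "regulatory", "operational", or "mixed"
    retrievals: tuple[str, ...]  # names of the retrievers to run


def plan_query(question: str) -> Plan:
    """Classify the question and decide which retrievals it needs."""
    words = set(question.lower().replace("?", " ").replace(",", " ").split())
    needs_vector = bool(words & REGULATORY_TERMS)
    needs_sql = bool(words & OPERATIONAL_TERMS)
    if needs_vector and needs_sql:
        return Plan("mixed", ("vector", "sql"))
    if needs_sql:
        return Plan("operational", ("sql",))
    # Default to semantic search when nothing points at structured data.
    return Plan("regulatory", ("vector",))


async def vector_search(question: str) -> dict:
    # Stand-in for a vector store lookup over regulation text.
    await asyncio.sleep(0)
    return {"source": "vector", "hits": ["<regulation passage>"]}


async def sql_query(question: str) -> dict:
    # Stand-in for a parameterized query over traceability events.
    await asyncio.sleep(0)
    return {"source": "sql", "rows": []}


RETRIEVERS = {"vector": vector_search, "sql": sql_query}


async def fan_out(question: str, plan: Plan) -> list[dict]:
    """Run every retrieval named in the plan concurrently."""
    tasks = [RETRIEVERS[name](question) for name in plan.retrievals]
    return await asyncio.gather(*tasks)


question = "Which mango lots from producer X met the CTEs required by FSMA 204?"
plan = plan_query(question)
results = asyncio.run(fan_out(question, plan))
```

The point of the sketch is the ordering: the plan is fixed before any store is touched, so the synthesis step only ever sees context that the planner asked for.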

What didn’t work

Vector-only with aggressive chunking — our first attempt. It failed on two fronts:

- Counting / aggregation queries (how many lots? weekly average?) — the LLM made up numbers when they weren’t explicitly in context

- Relational joins (producer X + time window Y + certification Z) — impossible without a structured query

The fix wasn’t “better chunking” — it was separating semantic retrieval from structured queries.
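To make the split concrete, here is a minimal sketch of a counting question answered by a structured query instead of by the model. It uses an in-memory SQLite database as a stand-in for PostgreSQL; the `cte_events` table, its columns, and the sample rows are assumptions invented for the example.

```python
import sqlite3

# In-memory SQLite as a stand-in for the relational store (assumption).
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE cte_events (
        lot_id TEXT, producer TEXT, event_type TEXT, event_date TEXT
    )
    """
)
# Illustrative rows only; not real traceability data.
conn.executemany(
    "INSERT INTO cte_events VALUES (?, ?, ?, ?)",
    [
        ("LOT-1", "producer_x", "harvesting", "2025-05-10"),
        ("LOT-1", "producer_x", "shipping", "2025-05-14"),
        ("LOT-2", "producer_x", "harvesting", "2025-06-02"),
        ("LOT-3", "producer_y", "harvesting", "2025-06-05"),
        ("LOT-4", "producer_x", "harvesting", "2025-09-01"),
    ],
)


def count_lots(producer: str, start: str, end: str) -> int:
    """Count distinct lots with at least one event in [start, end]."""
    row = conn.execute(
        """
        SELECT COUNT(DISTINCT lot_id) FROM cte_events
        WHERE producer = ? AND event_date BETWEEN ? AND ?
        """,
        (producer, start, end),
    ).fetchone()
    return row[0]


lots_in_window = count_lots("producer_x", "2025-05-01", "2025-07-31")
```

The number comes from the database, and the LLM only has to phrase it. ISO-formatted date strings are used so that `BETWEEN` compares them correctly as text.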

What did work

Explicit query routing → the planner decides whether a question requires vector search, SQL, graph traversal, or a mix.

Numeric guardrails → if the LLM’s answer contains numbers, we verify that they match what the structured query returned. If they don’t, we fail fast instead of returning wrong data.
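A minimal sketch of such a guardrail, assuming the structured results have already been reduced to a set of allowed numbers. The names `check_numbers` and `NumericMismatch` are hypothetical, and the regex is deliberately naive: it does not handle thousands separators, dates, or identifiers such as lot IDs.

```python
import re

# Naive pattern: integers and simple decimals only (assumption).
NUMBER_RE = re.compile(r"\d+(?:\.\d+)?")


class NumericMismatch(ValueError):
    """Raised when an answer contains a number the data does not support."""


def extract_numbers(text: str) -> set[float]:
    return {float(match) for match in NUMBER_RE.findall(text)}


def check_numbers(answer: str, allowed: set[float]) -> str:
    """Return the answer unchanged, or fail fast on unsupported numbers."""
    unsupported = extract_numbers(answer) - allowed
    if unsupported:
        raise NumericMismatch(
            f"numbers not backed by structured results: {sorted(unsupported)}"
        )
    return answer


# Values the structured query returned for this question (illustrative).
allowed = {12.0, 3.0}
checked = check_numbers("12 lots met the requirements and 3 have gaps.", allowed)
```

In practice the allowed set also needs known constants that legitimately appear in answers, such as the 204 in "FSMA 204"; otherwise the check rejects correct answers.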

Semantic caching keyed on question similarity → cuts LLM costs by ~40% without impact on quality.
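The idea behind a semantic cache can be sketched as follows. This is an illustration under stated assumptions: `embed` is a toy bag-of-words function standing in for a real embedding model, `SemanticCache` is a hypothetical class, and the 0.95 threshold is an arbitrary choice for the example.

```python
import math
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: bag-of-words token counts.
    return Counter(text.lower().replace("?", "").split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.95):
        self.threshold = threshold
        self.entries = []  # list of (embedding, answer) pairs

    def put(self, question: str, answer: str) -> None:
        self.entries.append((embed(question), answer))

    def get(self, question: str) -> Optional[str]:
        """Return the cached answer of the most similar question, if close enough."""
        query = embed(question)
        best_score, best_answer = 0.0, None
        for vector, answer in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None


cache = SemanticCache()
cache.put("How many lots did producer X ship in May?", "12 lots")
hit = cache.get("how many lots did producer x ship in may")
miss = cache.get("How many lots did producer X ship in June?")
```

The second lookup shows why the threshold matters: questions that differ only in a parameter (May versus June) look very similar, so a loose threshold would return the wrong cached answer.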

Full-trace observability with OpenTelemetry → every query is tracked end-to-end (planner → retrieval → LLM → guardrails). Critical for debugging.

Lessons learned

- The bottleneck of RAG in production isn’t retrieval — it’s deciding which retrieval to use

- Numeric guardrails are essential when the correctness of an answer drives regulatory decisions

- LangGraph beats linear chains for orchestrating conditional retrievals

- Multi-store + planner > single vector store with better chunking

- LLMs will hallucinate on structured aggregations — no matter how good the model is

What’s next?

The next iteration is to replace some planner rules with a fine-tuned router trained on real production examples. An LLM planner is flexible but expensive, so distilling its decisions into a smaller model is a logical next step.

If you’re building RAG for regulated domains, my advice is: start with the query planner, not the vector store.

Are you building something similar? Let’s talk — we’re open to sharing architecture and learning from other cases.